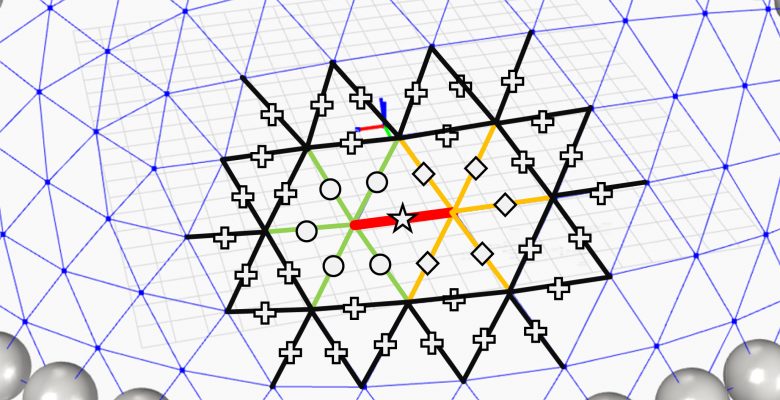

As computational design space exploration becomes more common, designers need faster ways to accurately explore increasingly vast design spaces. Surrogate models, often constructed with neural networks (NNs), are statistical approximations of a domain that return sufficiently accurate answers in a fraction of the time needed to run simulation models. However, existing NN models that predict finite element analysis (FEA) results for 3D trusses are not generalizable. For example, a model designed for a 10-bar truss cannot accurately predict the analysis results of a 12-bar truss. Such changes require new sample data and model retraining, which reduces the time-saving value of the approach. This paper introduces Generalizable Surrogate Models (GSMs), which use a set of feature descriptors of physical structures to aggregate analysis data from various structures, enabling a more general model that predicts performance across a variety of geometric classes, topologies, and boundary conditions. The paper presents the training of generalizable models on parametric dome, wall, and slab structures, and demonstrates the accuracy and generalizability of these GSMs compared to traditional NNs. The results first demonstrate how to combine analysis data from various structures to predict the performance of members of structures of the same class with different topologies and boundary conditions. They further demonstrate that these GSMs predict FEA results more closely than NN models created exclusively for a specific structure. Researchers and engineers can adopt the methodology of this study to create predictive models that approximate FEA.
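The core idea of aggregating data through feature descriptors can be illustrated with a minimal sketch. It assumes that each member is described by a fixed-length feature row, so that members from structures with different bar counts can be stacked into one training matrix. The function name `member_descriptors` and the specific descriptors chosen here (member length, absolute direction cosines, and distance from the member midpoint to the nearest support) are hypothetical and serve only as illustration; they are not the descriptors defined in this paper.

```python
import numpy as np

def member_descriptors(nodes, members, supports):
    """Return one fixed-length feature row per member.

    The five descriptors are illustrative assumptions, not the paper's set:
    [length, |cos_x|, |cos_y|, |cos_z|, distance to nearest support].
    """
    nodes = np.asarray(nodes, dtype=float)
    support_xyz = nodes[list(supports)]
    rows = []
    for i, j in members:
        a, b = nodes[i], nodes[j]
        vec = b - a
        length = np.linalg.norm(vec)
        midpoint = (a + b) / 2.0
        # Distance from the member midpoint to the closest supported node.
        d_support = np.min(np.linalg.norm(support_xyz - midpoint, axis=1))
        rows.append([length, *np.abs(vec / length), d_support])
    return np.array(rows)

# Two toy structures with different member counts (3 bars and 2 bars).
truss_a = (
    [(0, 0, 0), (1, 0, 0), (0, 1, 0), (0, 0, 1)],  # node coordinates
    [(0, 3), (1, 3), (2, 3)],                      # member connectivity
    [0, 1, 2],                                     # supported node indices
)
truss_b = (
    [(0, 0, 0), (2, 0, 0), (1, 0, 1)],
    [(0, 2), (1, 2)],
    [0, 1],
)

# Because every member maps to the same number of features, rows from both
# structures can be pooled into a single training matrix.
X = np.vstack([member_descriptors(*truss_a), member_descriptors(*truss_b)])
print(X.shape)
```

In this sketch the input width depends on the number of descriptors rather than on the number of bars, which is what would allow one model to be trained on data pooled from several structures. Per-member FEA results would form the matching target vector.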